Patient Safety and Quality Improvement


Human Error Is Inevitable—So How Do We Reduce the Risk of Harm?

David E. Eibling, MD; Matthew M. Smith, MD; and members of the AAO-HNS Patient Safety and Quality Improvement Committee

The Event

We had just finished the composite resection, and it was late in the evening because the case had started late. The drapes were off, and I had just removed the anode tube to insert the cuffed tracheostomy tube. I pulled on the upper stay suture to bring the trachea into view, and it immediately came loose; the tracheal ring had evidently been broken when the suture was placed. Undeterred, I pulled on the lower stay suture to stabilize the trachea as I attempted to insert the tracheostomy tube and, to my horror, it also pulled out before the tube was successfully passed into the trachea. Filled with a sense of impending doom, I peered into the incision, saw nothing but pink tissue, and realized I had no idea where the tracheal opening was.

There followed a flurry of activity and a rising level of pandemonium. The anesthesia resident and I were the only physicians in the room. I asked the scrub tech to quickly find two Army-Navy retractors in the pile of instruments, and the circulator to turn the OR lights back on and call my attending. I asked the anesthesia resident to hand me their suction, since ours was already off the field. It seemed like an eternity, but it was probably less than two minutes before the retractors were in the incision, the trachea was visualized and pulled up with a double hook, and the tracheostomy tube was successfully inserted. By the time the two attendings returned to the room, the airway was secure and all was well, apart from the residual adrenaline rush from the terror I had experienced.

At the time, a quarter century before the release of the 1999 Institute of Medicine report To Err Is Human, pulling out the remaining stay suture while changing to a tracheostomy tube would have been interpreted as the sign of a “bad resident.” The term near miss was restricted to aviation and, with a few exceptions, medicine had not yet begun to appreciate that a near miss might someday be a hit.

“No harm, no foul” was the oft-repeated refrain, and in fact the event was never presented at our department’s M&M conference. Other than a brief explanation to the attending as he rushed back into the room, there was no attempt to review what might be done to prevent a recurrence, which could have had catastrophic consequences for the patient, not to mention the hapless resident and his department.

James Reason, author of the well-known book Human Error, was among the first to emphasize that the capacity for error accompanies all human endeavors and that no one is immune. His Swiss cheese model holds that multiple barriers stand between an error and its potential victim, and that harm results only when the holes in successive barriers line up. The familiar fishbone diagram similarly helps visualize the multiple factors that contribute to an untoward event.

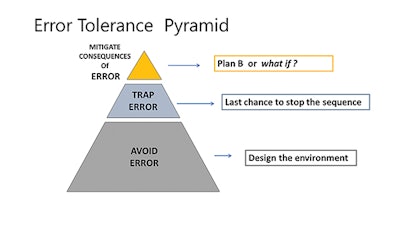

Figure 1. Error Tolerance Pyramid from the teaching materials of the U.S. Department of Veterans Affairs National Center for Patient Safety, 2019 Clinical Team Training Curriculum PowerPoint.

The recognition that error is a characteristic of all humans, and that all barriers to harm have holes, tells us that there are no simple fixes. This will not surprise most of us, as many are aware of wrong-site surgery cases that occurred despite a preoperative time out. Hence, interventions to reduce harm must consist of multiple barriers, in the hope that many layers of protection will reduce the chance that human error results in harm. This concept can be difficult to accept, because considerable effort has been invested in promulgating one new policy or another that is expected to prevent human error.

The error tolerance pyramid (Figure 1) is a useful tool for organizing our thinking about what are typically numerous potential interventions. Some interventions are stronger than others, a concept that has been illustrated in the Swiss cheese diagram as barriers made of different materials, ranging from window screen to reinforced concrete. But even reinforced concrete can be broken. Hence, multiple interventions that act at different levels to stop the error sequence are more likely than any single solution to succeed against human error. This visualization, used in the U.S. Department of Veterans Affairs (VA) National Center for Patient Safety (NCPS) teaching materials on harm reduction, stratifies interventions into three (overlapping) tiers. The base of the pyramid is the design of the environment, which spans the entire domain of the human-technology interface. Over the past 70 to 80 years, human factors researchers and engineers have taken an active role in studying and designing infrastructure to reduce the likelihood of error.

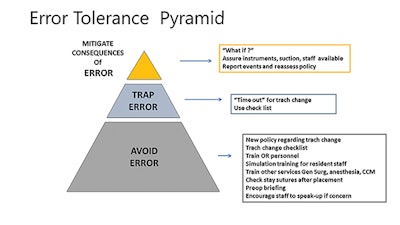

Figure 2. Our case can illustrate the utilization of the three-level harm reduction pyramid.

The midsection of the pyramid addresses interventions that interrupt the error sequence. Perhaps the most vivid example in surgical practice is the preoperative time out. It is now so integral to practice that it is surprising to recall that the step was introduced only about 15 years ago.

The top of the pyramid, and its smallest segment, is our back-up plan, which seeks to reduce harm if an error does occur despite processes designed to interrupt the error sequence. Attention to this step is an integral characteristic of a high reliability organization (HRO). HROs expect that errors and failures will occur and that harm is best mitigated by a back-up plan devised in advance.

Applying the error tolerance pyramid to our case (Figure 2) illustrates how appreciating the possibility that a stay suture may pull out drives not only the development of policy but also the training of others who might be called on to help find the tracheal opening in a fresh incision. The policy could include a requirement for a second time out to ensure that the patient is adequately oxygenated and sedated, and that adequate personnel and instruments are available in the room should they be needed.

To summarize, strategies that employ multiple levels of harm reduction barriers will be more successful in preventing harm due to (inevitable) human error. The error tolerance pyramid that is taught by the VA NCPS is a framework that may assist in the development of a multilevel harm reduction program.

Additional Readings:

Institute of Medicine Committee on Quality of Health Care in America. To Err Is Human: Building a Safer Health System. National Academies Press; 2000.

Reason J. Human Error. Cambridge University Press; 1990.

Woods D. Behind Human Error. CRC Press; 2010.

Dekker S. The Field Guide to Understanding “Human Error.” CRC Press; 2014.

Excerpted from the AAO-HNSF 2019 Annual Meeting & OTO Experience Panel Presentation by the Patient Safety and Quality Improvement Committee: Near Misses, Never Events, and Just Plain Scary Cases